Feyn - Symbolic AI using the QLattice

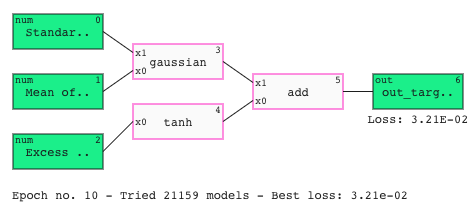

The QLattice is an explainable symbolic AI that accelerates research and scientific discovery.

Feyn makes it easy to explore and understand relationships in your data, putting the science in data science.

Quick start

From zero to scientific hero.

Just pip install feyn.
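After running `pip install feyn`, you may want to confirm that the package is visible to your Python environment. The snippet below is a minimal sketch using only the standard library. The helper name `installed_version` is hypothetical and is not part of Feyn.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of `package`, or None if it is absent.

    Hypothetical helper for illustration; it is not part of the Feyn API.
    """
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("feyn")
    if version is None:
        print("feyn is not installed; run: pip install feyn")
    else:
        print(f"feyn {version} is installed")
```

If the check reports that the package is missing, make sure `pip` and `python` point at the same environment.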

Using Feyn

Get started with complete examples for classification and regression.

Feyn in 5 minutes

API reference

The Feyn Python module in all of its nitty-gritty details.

API Reference

Extra, extra!

Feyn 3.3.0 is out!

We've added an automatic response plot to make it easier to compare and understand your models.

We've also added support for the upcoming release of NumPy, so you're ready to go when it hits.

See the full changelog here

In other news...

Read about our other recent new features and additions to the Feyn Documentation.

You can also check out our changelog here.

Insert data - press play!

Get started even faster with automatic type detection for your data when using Auto Run.

Get going with our Beginner Tutorials for common cases

Learn the QLattice workflow through solving well-known problems.

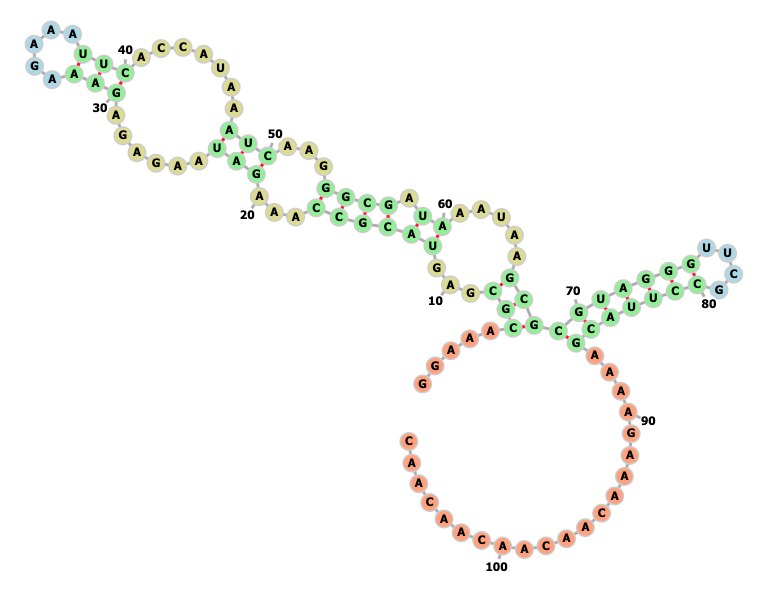

Dust off your QLattice skills in life sciences

Explore how you can use the QLattice to solve problems in life science.

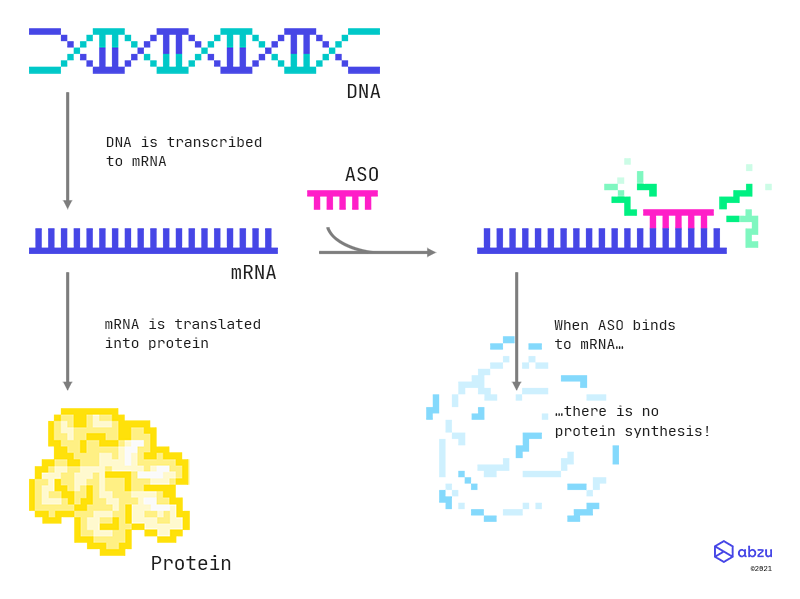

Feyn and R for ASOs

Explore antisense oligonucleotides in your familiar workflow combining Feyn with R.